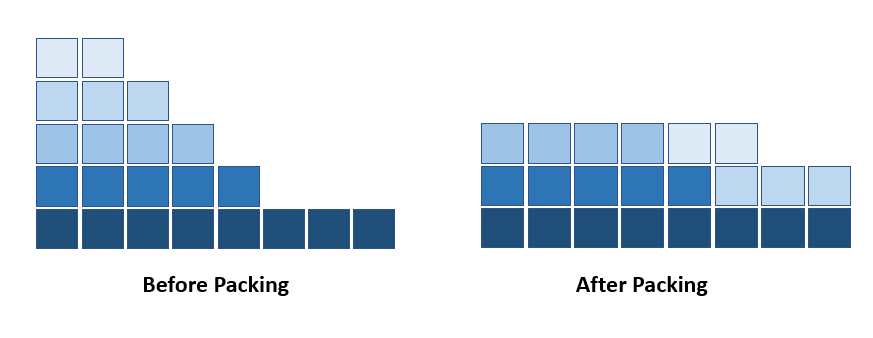

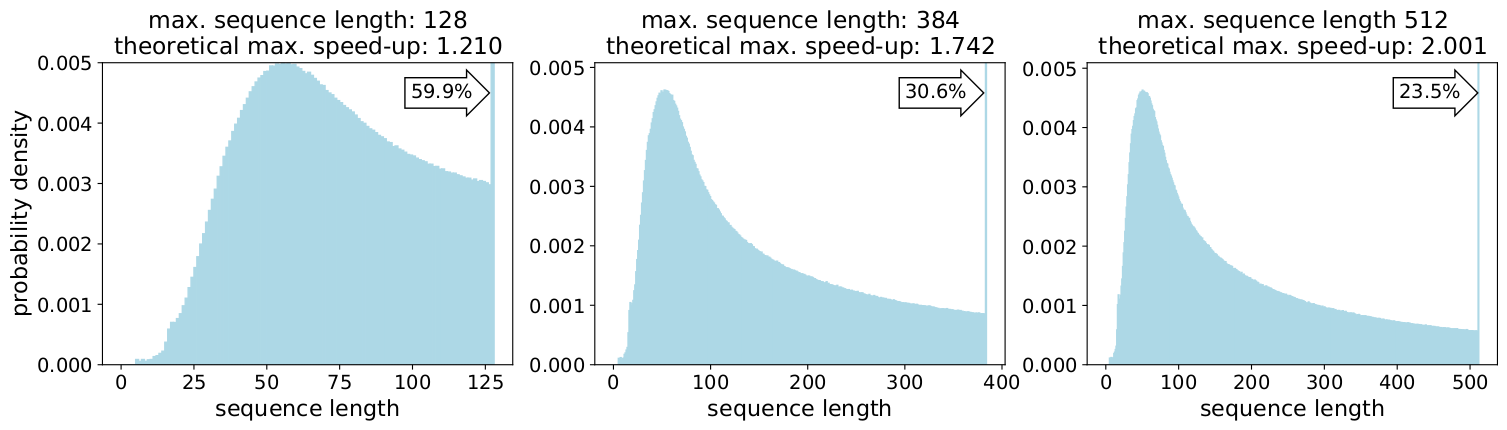

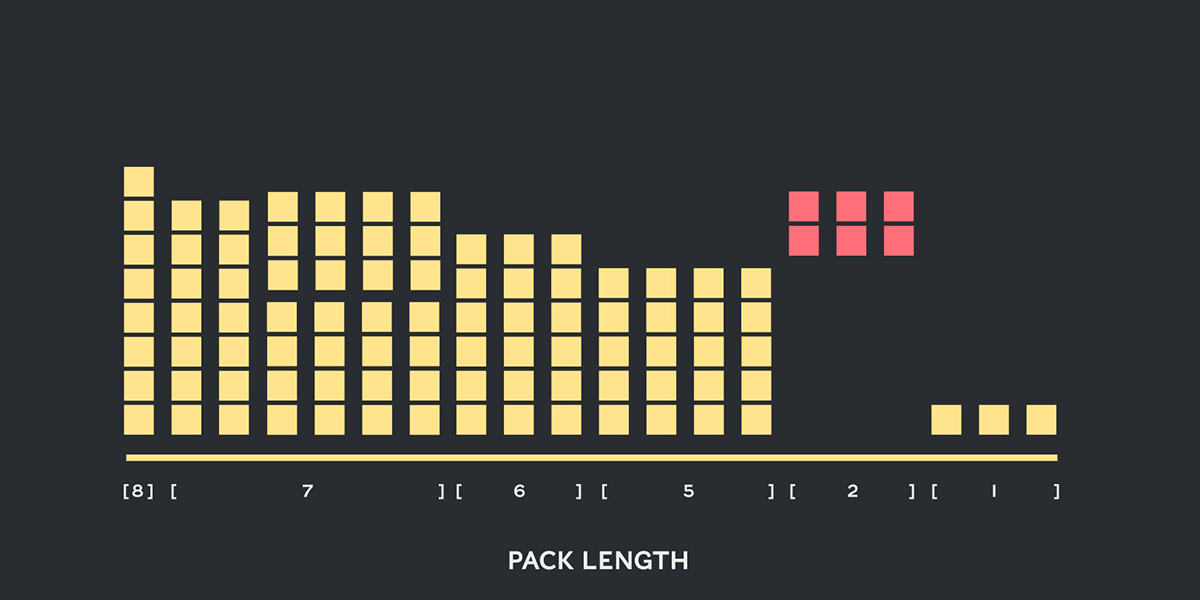

Introducing Packed BERT for 2x Training Speed-up in Natural Language Processing | by Dr. Mario Michael Krell | Towards Data Science

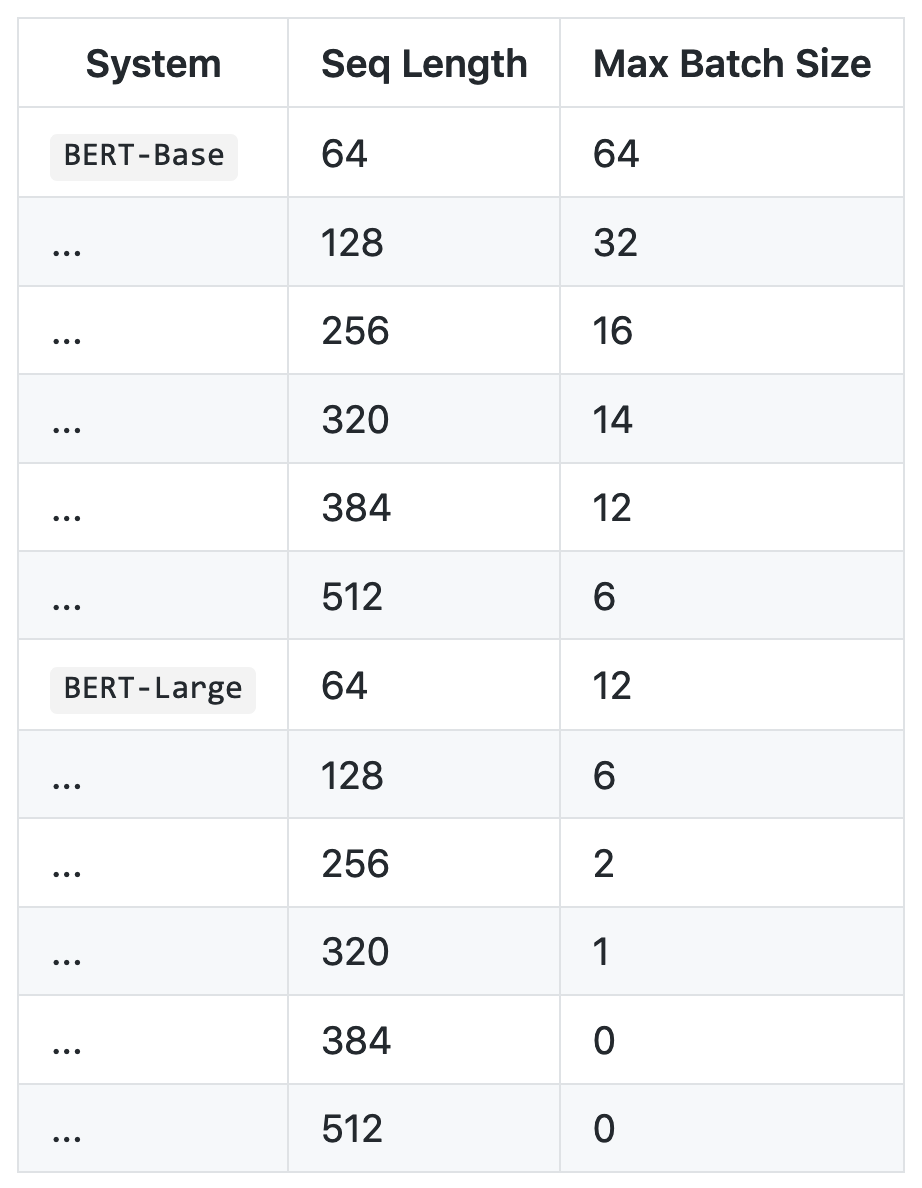

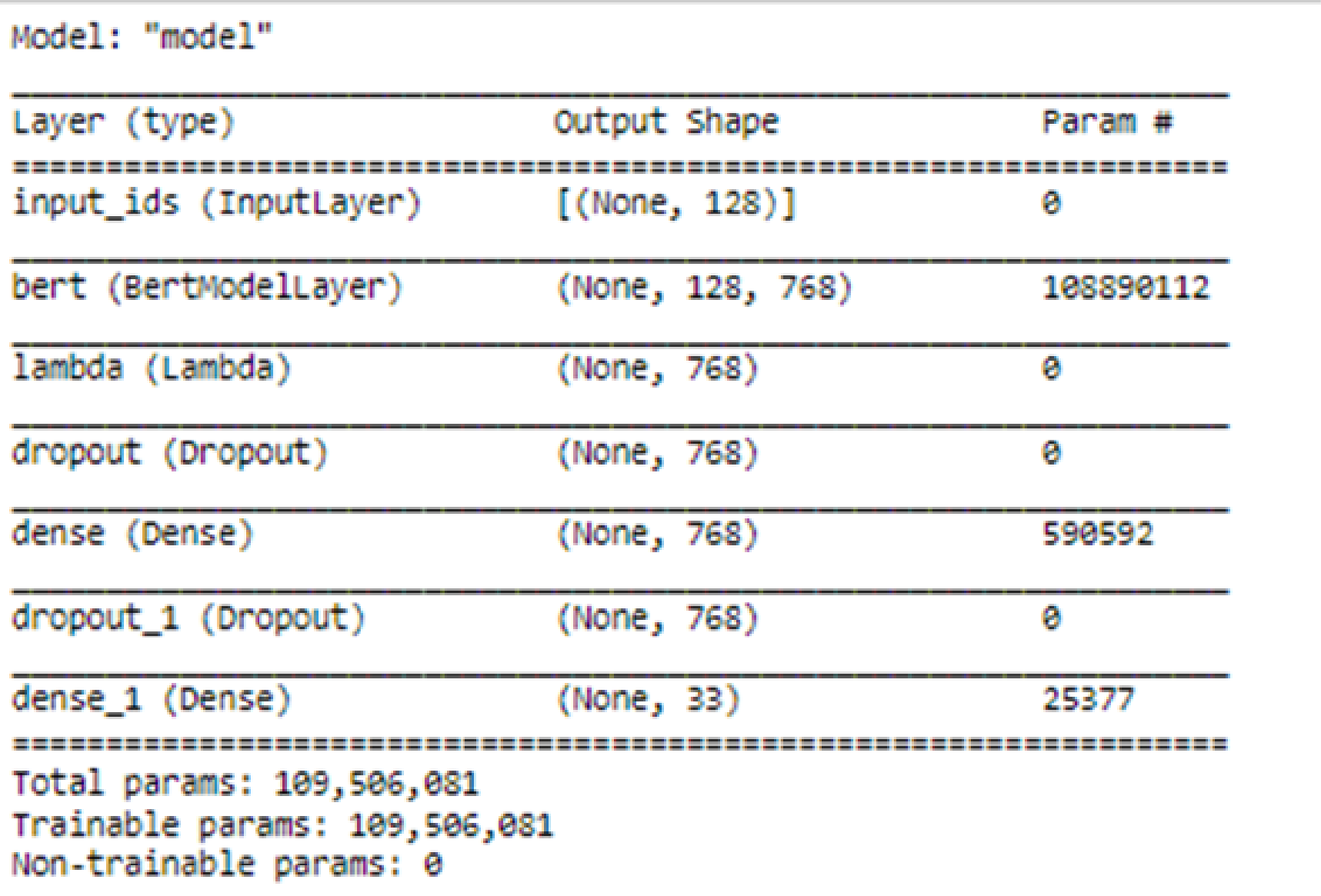

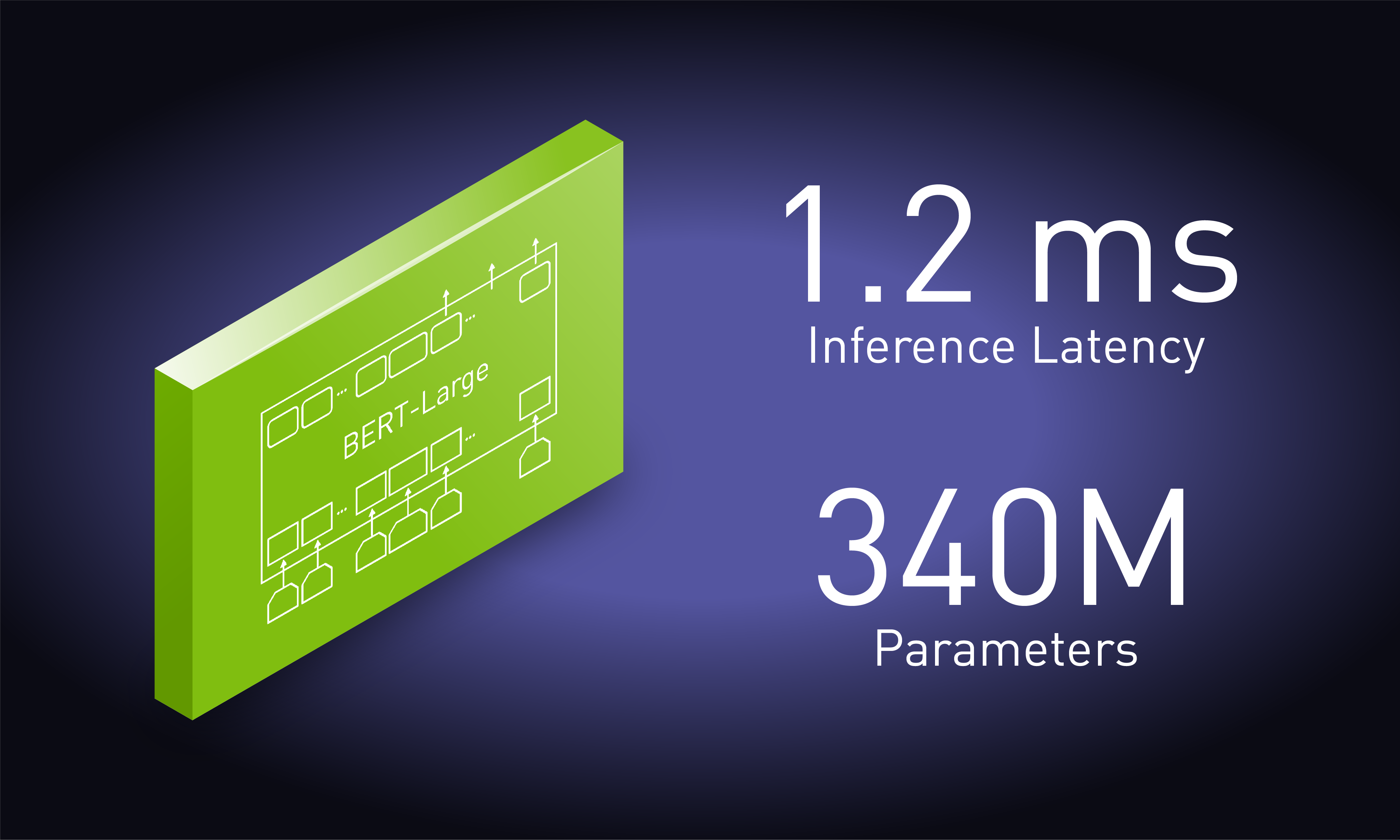

Real-Time Natural Language Processing with BERT Using NVIDIA TensorRT (Updated) | NVIDIA Technical Blog

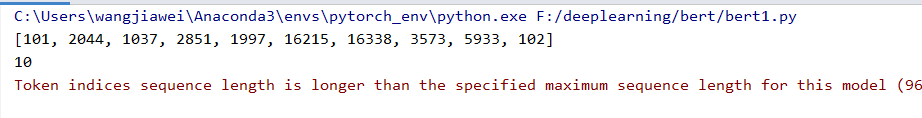

token indices sequence length is longer than the specified maximum sequence length · Issue #1791 · huggingface/transformers · GitHub

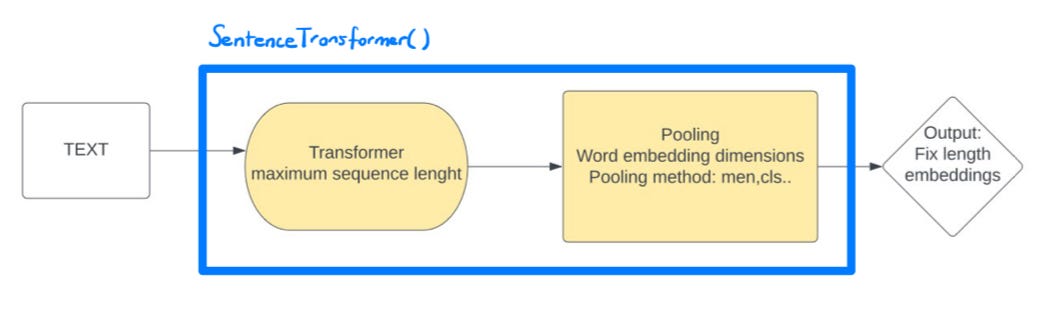

15.8. Bidirectional Encoder Representations from Transformers (BERT) — Dive into Deep Learning 1.0.0-beta0 documentation

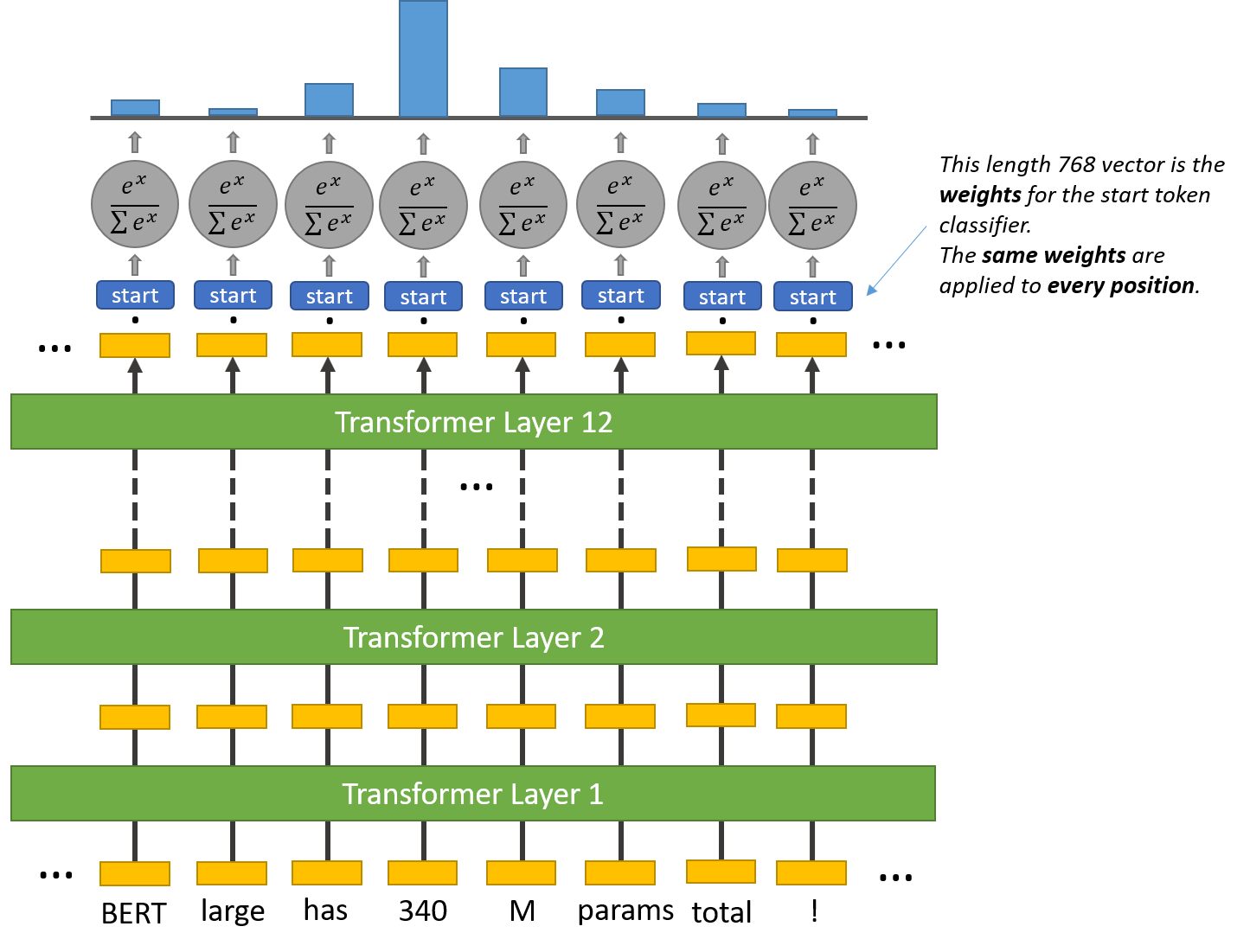

3: A visualisation of how inputs are passed through BERT with overlap... | Download Scientific Diagram